It seems that the FTS add-on does not support Chinese. Am I correct?

How can I make it support Chinese?

Hi,

I think it's possible to achieve that if you are familiar with Lucene.

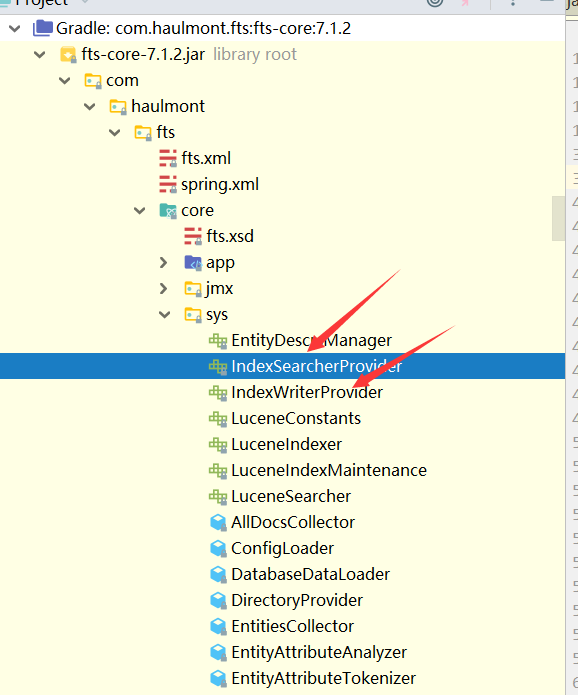

See the source code of FTS addon:

You can implement the IndexWriterProvider and IndexSearcherProvider to process Chinese.

Thanks @lugreen. I thought it was an OOTB feature that could be enabled simply by changing a configuration setting. It looks like it isn't.

Hi @Peter_Liang,

In fact, it's easy to support Chinese.

- Create an IndexWriterProvider that extends IndexWriterProviderBean, and create an Analyzer that supports Chinese. The well-known Analyzer for Chinese is IKAnalyzer. Although IKAnalyzer is no longer supported by its original author, there are some community editions to choose from. I think GitHub - blueshen/ik-analyzer: Tokenizer support Lucene5/6/7/8/9+ version, LTS is a good choice.

- Override the built-in IndexWriterProviderBean; see Extending Business Logic - CUBA Framework Developer's Manual (扩展业务逻辑 - CUBA 框架开发者手册) to find out how.
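To illustrate why a Chinese-aware Analyzer is needed at all, here is a minimal sketch using only the JDK. It assumes, purely for illustration, a tokenizer that splits on whitespace, and contrasts it with a naive overlapping two-character (bigram) split. The class and method names are hypothetical and are not part of CUBA or Lucene, and the bigram approach is a simplification: it is not how IKAnalyzer or SmartChineseAnalyzer segment text, since both use dictionary or statistical word segmentation.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration only; not part of CUBA or Lucene.
class ChineseTokenizationSketch {

    // Splits on whitespace, which works for space-delimited languages.
    static List<String> whitespaceTokens(String text) {
        List<String> tokens = new ArrayList<>();
        for (String part : text.trim().split("\\s+")) {
            if (!part.isEmpty()) {
                tokens.add(part);
            }
        }
        return tokens;
    }

    // Emits overlapping two-character tokens, ignoring whitespace.
    // A single remaining character is emitted as one token.
    static List<String> bigramTokens(String text) {
        int[] cps = text.codePoints()
                .filter(cp -> !Character.isWhitespace(cp))
                .toArray();
        List<String> tokens = new ArrayList<>();
        if (cps.length == 1) {
            tokens.add(new String(cps, 0, 1));
        }
        for (int i = 0; i + 1 < cps.length; i++) {
            tokens.add(new String(cps, i, 2));
        }
        return tokens;
    }

    public static void main(String[] args) {
        String text = "全文搜索"; // "full-text search", written without spaces
        System.out.println(whitespaceTokens(text)); // [全文搜索]
        System.out.println(bigramTokens(text));     // [全文, 文搜, 搜索]
    }
}
```

With the whitespace split, the whole phrase becomes a single token, so a query for 搜索 alone would not match it. Once the text is broken into smaller units, the same query can match.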

Thanks @lugreen. Yes, it is quite easy! I followed the approach you described, but I used SmartChineseAnalyzer instead of IKAnalyzer.

I added the smartcn dependency and used SmartChineseAnalyzer as the Analyzer, which supports both Chinese and English.

To use SmartChineseAnalyzer, I added the library to build.gradle.

-------------------build.gradle-------------------

    configure(globalModule) {
        dependencies {
            ...
            runtime 'org.apache.lucene:lucene-analyzers-smartcn:7.5.0'
            compile 'org.apache.lucene:lucene-analyzers-smartcn:7.5.0'
        }
        ...

Then I extended IndexWriterProviderBean, overriding the createAnalyzer() method as follows.

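    import java.util.HashMap;
    import java.util.Map;

    import org.apache.lucene.analysis.Analyzer;
    import org.apache.lucene.analysis.cn.smart.SmartChineseAnalyzer;
    import org.apache.lucene.analysis.core.WhitespaceAnalyzer;
    import org.apache.lucene.analysis.miscellaneous.PerFieldAnalyzerWrapper;

    // EntityAttributeAnalyzer, FLD_LINKS and FLD_MORPHOLOGY_ALL are provided by the FTS add-on; import them as needed.
    public class IndexWriterProviderBean extends com.haulmont.fts.core.sys.IndexWriterProviderBean {

        @Override
        protected Analyzer createAnalyzer() {
            Map<String, Analyzer> analyzerPerField = new HashMap<>();
            analyzerPerField.put(FLD_LINKS, new WhitespaceAnalyzer());
            // SmartChineseAnalyzer segments Chinese text into words for this field.
            analyzerPerField.put(FLD_MORPHOLOGY_ALL, new SmartChineseAnalyzer());
            return new PerFieldAnalyzerWrapper(new EntityAttributeAnalyzer(), analyzerPerField);
        }
    }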
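For readers unfamiliar with PerFieldAnalyzerWrapper: it picks an Analyzer by field name and falls back to the default Analyzer for any field that is not in the map. The following is a simplified JDK-only model of that dispatch idea, not Lucene code. The class name, the field names and the use of plain functions as stand-ins for Analyzers are all assumptions made for illustration.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Function;

// Hypothetical stand-in for the idea behind PerFieldAnalyzerWrapper; not Lucene code.
class PerFieldDispatchSketch {

    private final Function<String, List<String>> defaultAnalyzer;
    private final Map<String, Function<String, List<String>>> perField;

    PerFieldDispatchSketch(Function<String, List<String>> defaultAnalyzer,
                           Map<String, Function<String, List<String>>> perField) {
        this.defaultAnalyzer = defaultAnalyzer;
        this.perField = new HashMap<>(perField);
    }

    // Uses the analyzer registered for the field, or the default one if none is registered.
    List<String> analyze(String field, String text) {
        return perField.getOrDefault(field, defaultAnalyzer).apply(text);
    }

    public static void main(String[] args) {
        // Stand-in "analyzers": one keeps the text whole, one splits on single spaces.
        Function<String, List<String>> whole = text -> List.of(text);
        Function<String, List<String>> bySpace = text -> Arrays.asList(text.split(" "));

        Map<String, Function<String, List<String>>> overrides = new HashMap<>();
        overrides.put("links", bySpace); // hypothetical field name

        PerFieldDispatchSketch wrapper = new PerFieldDispatchSketch(whole, overrides);
        System.out.println(wrapper.analyze("links", "a b")); // [a, b]
        System.out.println(wrapper.analyze("title", "a b")); // [a b]
    }
}
```

In the override above, this means only the two listed fields get special treatment, and every other field is still handled by EntityAttributeAnalyzer.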